In May, U.S. Secretary of Education Arne Duncan visited Detroit Public Schools' Thirkell Elementary to honor the school for its performance. After visiting the school, Duncan said he was "very, very encouraged" by the progress made at Thirkell and other DPS schools.

In July, the Mackinac Center for Public Policy identified Thirkell as the highest-performing elementary or middle school in the state on its Context and Performance report card. The report card takes student background into account, and Thirkell, where nearly all students come from needy backgrounds, was posting impressive academic results.

Just two weeks ago, Excellent Schools Detroit gave Thirkell one of the highest grades on its review of Detroit-area schools. Excellent Schools Detroit used student test scores and unannounced school visits as part of its grading system.

But recently, the state of Michigan effectively gave Thirkell an "F." The state's own report card ranked Thirkell in the bottom 1 percent of Michigan schools.

How could this happen? There is no question that the U.S. Secretary of Education, Excellent Schools Detroit, the Mackinac Center and the Michigan Department of Education are trying to identify the same thing — high-quality schools. And yet, the state's report card appears way off the mark.

Sure, it is possible that Thirkell parents, students, community members and three third-party observers are mistaken, and that the school really is a failure. But it is more likely that the state isn't taking reality into account.

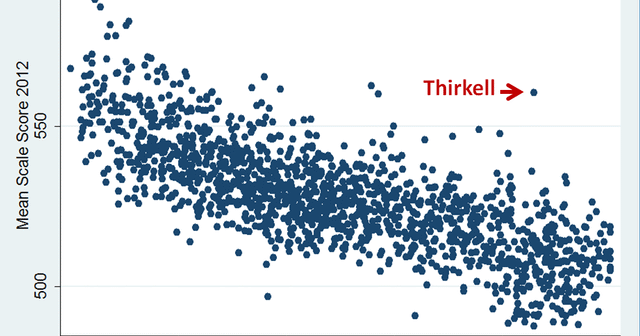

As shown in the graphic nearby, student test scores are closely related to student poverty. In the graphic, schools' MEAP scores for fifth graders are plotted against the percentage of fifth graders eligible for free lunch. The clear downward slope shows that schools that serve more disadvantaged students tend to post lower scores. (As shown, Thirkell is doing far better than expected.)
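For readers who want to see what "better than expected" could mean in practice, here is a minimal sketch. It assumes a simple straight-line fit of average score against the share of students eligible for free lunch, which may differ from the method behind the graphic or the Context and Performance report card. All numbers are hypothetical, and the function names `fit_line` and `performance_gap` are made up for illustration.

```python
from statistics import mean


def fit_line(xs, ys):
    """Ordinary least-squares fit of ys on xs; returns (slope, intercept)."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return slope, intercept


def performance_gap(pct_free_lunch, score, slope, intercept):
    """Actual score minus the score the fitted line predicts for that poverty level."""
    expected = intercept + slope * pct_free_lunch
    return score - expected


# Hypothetical data, not real MEAP results:
# (percent of students eligible for free lunch, average scale score)
schools = [(10, 540), (30, 530), (50, 520), (70, 510), (90, 500)]

slope, intercept = fit_line(
    [pct for pct, _ in schools],
    [score for _, score in schools],
)

# A hypothetical high-poverty school that scores above the fitted line.
gap = performance_gap(90, 525, slope, intercept)
```

A negative `slope` matches the downward trend in the graphic, and a positive `gap` marks a school that is posting higher scores than its poverty level alone would predict. A ranking built only on raw scores would not see that gap.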

This reality will not surprise education officials, teachers, researchers or parents. And yet, the state report card does not take student background into account. Half of a school's Top-to-Bottom rank depends on absolute test scores, which can be influenced by many things outside of a school's control.

It is true that a quarter of a school's rank on the Top-to-Bottom list is based on student academic growth. However, that is not enough to offset the large portion that is based on student proficiency, and some studies have shown that academic growth can be related to student background as well.

School officials might argue that accounting for student background is unfair and sets lower expectations for students. That argument isn't relevant here. The purpose of the state report card is to provide accountability for public schools, not to set expectations for students.

This report card is what the state uses to send a signal to schools that are performing poorly and to recognize schools that are posting impressive results. Such a system is necessary because the state does not allow parents wide-ranging choice: Many conventional schools have an assured level of enrollment regardless of performance, simply because parents do not have another available option.

By not considering student background, the state's ranking may be sending some schools the wrong signal, and may even penalize schools that enroll more disadvantaged students.

In a 2013 paper for the CALDER Center on the importance of taking student background into account when judging school and teacher quality, economists Mark Ehlert, Cory Koedel, Eric Parsons and Michael Podgursky write:

"It is difficult to understand how a system that ignores [student background] and attempts to signal to all (or nearly all) disadvantaged schools that they must perform better will help improve instruction."

In fact, they note, such a grading system could make things worse:

"A potentially harmful consequence of [a system that does not consider student background] is that it could result in a perpetuating cycle of the destruction and re-invention of instructional practices at disadvantaged schools, whether these practices are effective or not."

In their paper, the economists show an example of two schools, one they call "Rough Diamond" (a school with disadvantaged students that is doing better than similar schools), and the other they call "Gold Leaf" (a school with advantaged students that is doing worse than similar schools). They show that various measures of school quality can result in different grades for these schools.

They write: "A concrete question that should be at the forefront in the design of the evaluation system is this: What signal should be sent to Rough Diamond?"

The Michigan Department of Education's ranking system risks sending negative signals to Michigan's "rough diamond" schools. Is that really what these schools need?

Permission to reprint this blog post in whole or in part is hereby granted, provided that the author (or authors) and the Mackinac Center for Public Policy are properly cited.
